v1.83.3-stable - MCP Toolsets & Skills Marketplace

Deploy this version

Docker:

```shell
docker run \
  -e STORE_MODEL_IN_DB=True \
  -p 4000:4000 \
  docker.litellm.ai/berriai/litellm:main-v1.83.3-stable
```

Pip:

```shell
pip install litellm==1.83.3
```

Key Highlights

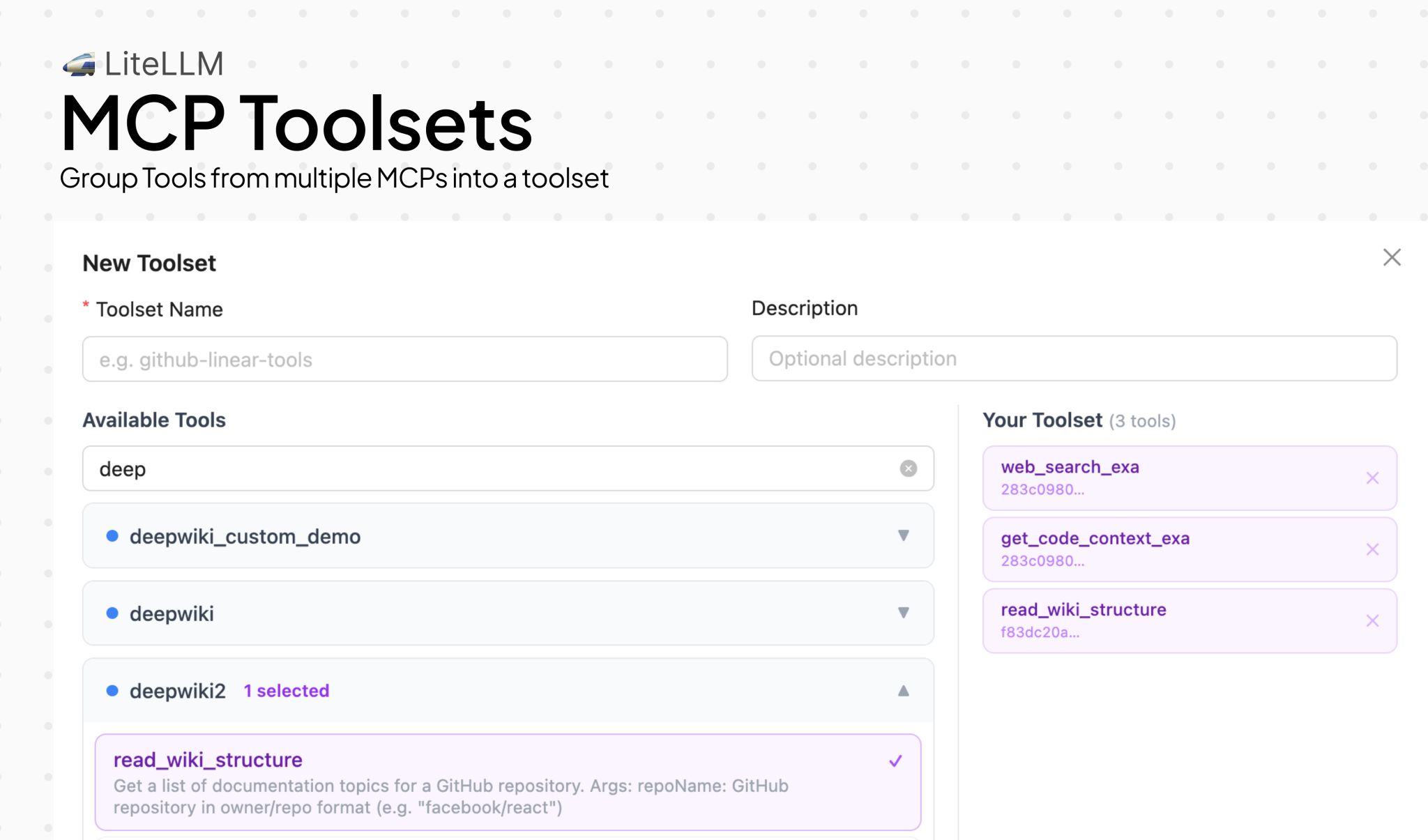

- MCP Toolsets — Create curated tool subsets from one or more MCP servers with scoped permissions, and manage them from the UI or API

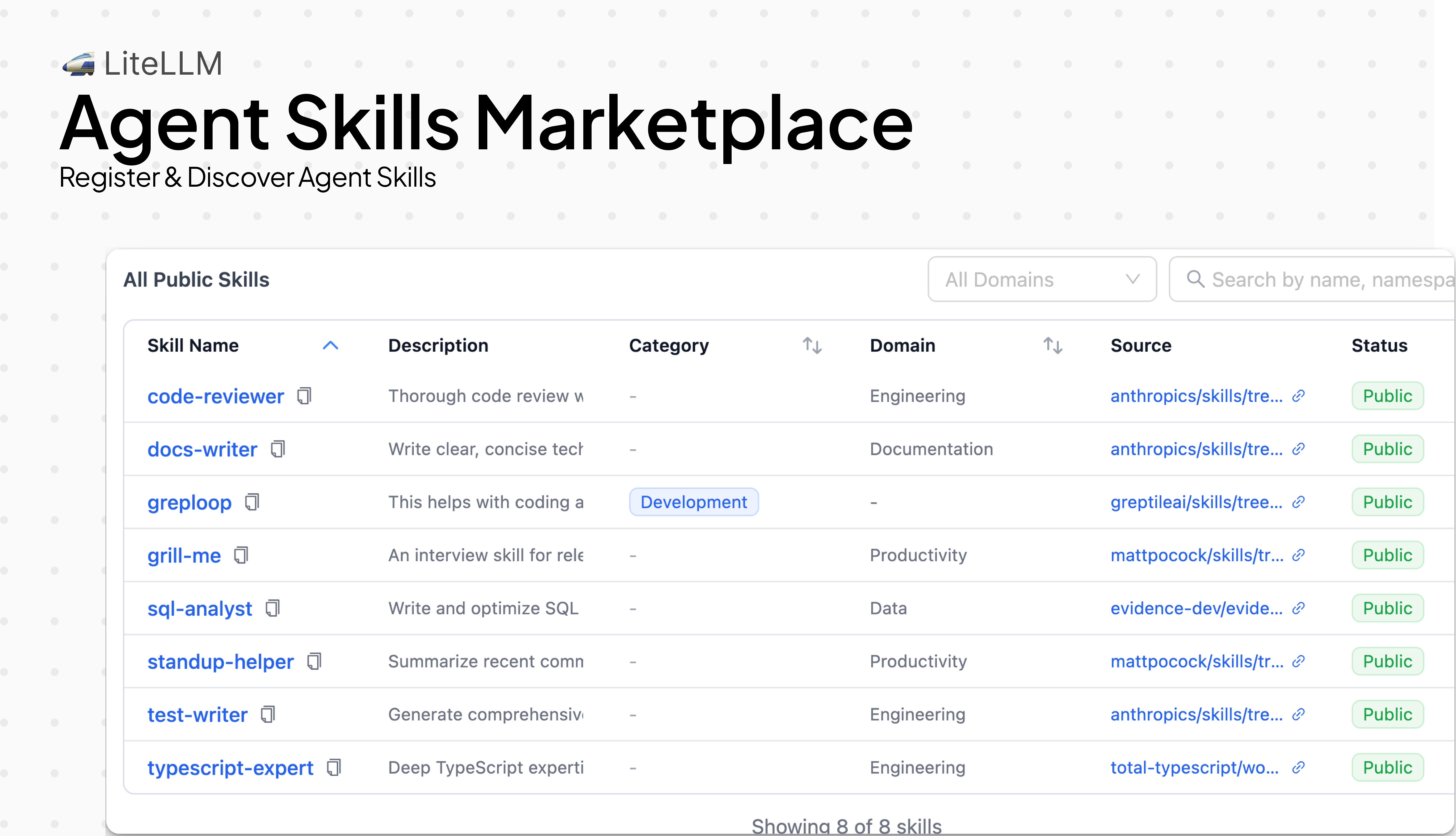

- Skills Marketplace — Browse, install, and publish Claude Code skills from a self-hosted marketplace — works across Anthropic, Vertex AI, Azure, and Bedrock

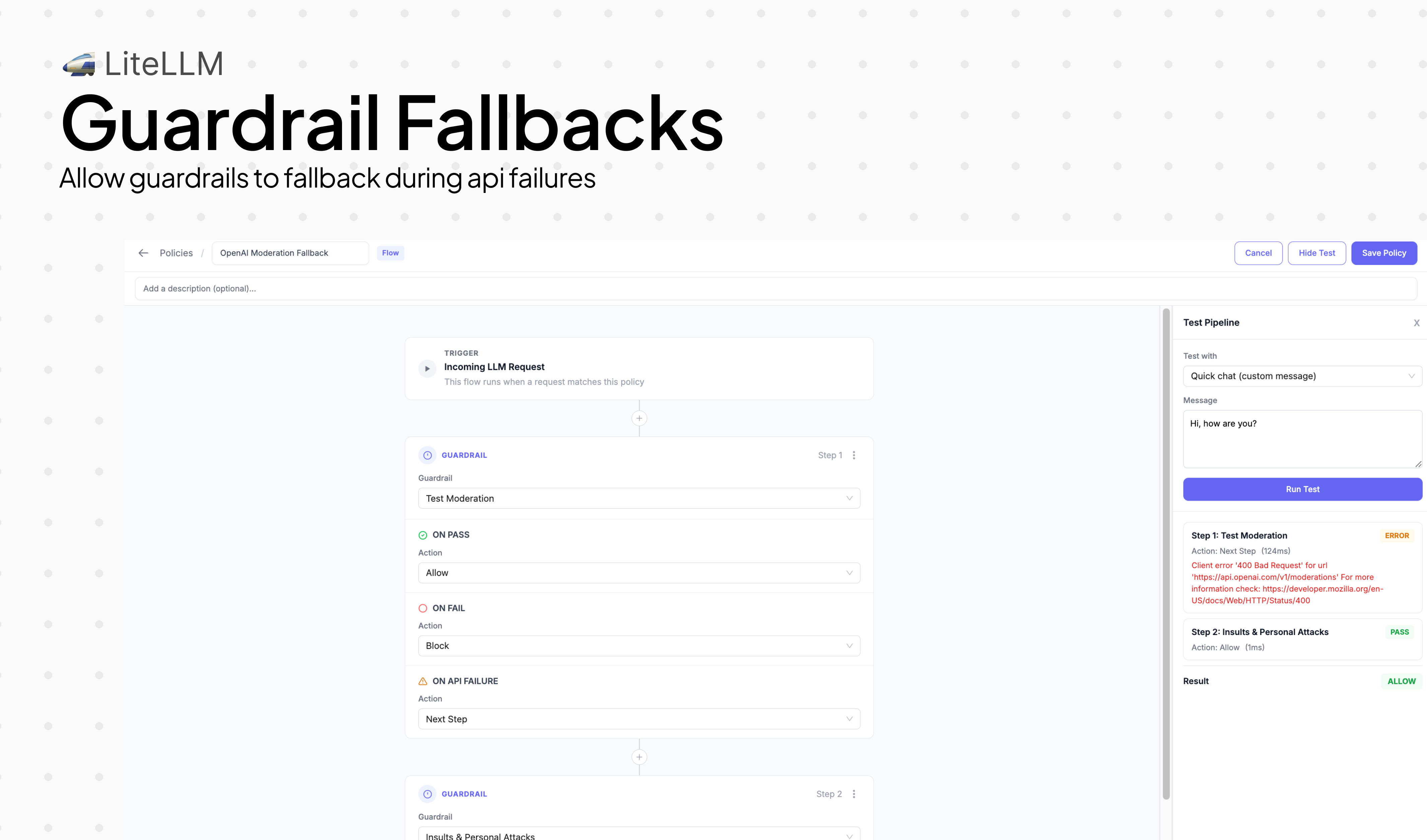

- Guardrail Fallbacks — Configure

on_errorbehavior so guardrail failures degrade gracefully instead of blocking the request - Team Bring Your Own Guardrails — Teams can now attach and manage their own guardrails directly from team settings in the UI

Skills Marketplace

The Skills Marketplace gives teams a self-hosted catalog for discovering, installing, and publishing Claude Code skills. Skills are portable across Anthropic, Vertex AI, Azure, and Bedrock — so a skill published once works everywhere your gateway routes to.

Guardrail Fallbacks

Guardrail pipelines now support an optional on_error behavior. When a guardrail check fails or errors out, you can configure the pipeline to fall back gracefully — logging the failure and continuing the request — instead of returning a hard 500 to the caller. This is especially useful for non-critical guardrails where availability matters more than enforcement.
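The fallback behavior described above can be modeled in plain Python. This is a hypothetical sketch, not LiteLLM's implementation: the `"fail_open"` / `"fail_closed"` values, the `GuardrailBlocked` exception, and `run_guardrails` are illustrative names, and the sketch assumes the fallback covers guardrail *errors* (for example a timed-out guardrail backend) while a deliberate block is still enforced.

```python
import logging
from typing import Callable, List

logger = logging.getLogger("guardrail_fallback_sketch")


class GuardrailBlocked(Exception):
    """Raised when a guardrail deliberately rejects the request (a policy decision)."""


# Hypothetical guardrail shape: inspect the prompt, raise to block, return None to pass.
Guardrail = Callable[[str], None]


def run_guardrails(prompt: str, guardrails: List[Guardrail], on_error: str = "fail_closed") -> bool:
    """Return True if the request may proceed to the model.

    on_error="fail_closed": a guardrail that crashes rejects the request.
    on_error="fail_open":   a guardrail that crashes is logged and skipped.
    GuardrailBlocked is a policy decision and is enforced in both modes.
    """
    for guardrail in guardrails:
        try:
            guardrail(prompt)
        except GuardrailBlocked:
            return False
        except Exception as exc:
            if on_error == "fail_open":
                logger.warning("guardrail %s failed, continuing: %r", guardrail.__name__, exc)
                continue
            return False
    return True


def flaky_guardrail(prompt: str) -> None:
    # Simulates an unavailable guardrail backend.
    raise TimeoutError("guardrail provider unreachable")


def pii_guardrail(prompt: str) -> None:
    # Toy policy check: block prompts that mention an SSN.
    if "ssn" in prompt.lower():
        raise GuardrailBlocked("PII detected")


print(run_guardrails("hello", [flaky_guardrail], on_error="fail_open"))  # True
print(run_guardrails("hello", [flaky_guardrail]))                        # False
```

Fail-open favors availability for non-critical checks; fail-closed favors enforcement. Keeping policy blocks separate from infrastructure errors is what lets the fallback stay safe.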

Team Bring Your Own Guardrails

Teams can now attach guardrails directly from the team management UI. Admins configure available guardrails at the project or proxy level, and individual teams select which ones apply to their traffic — no config file changes or proxy restarts needed. This also ships with project-level guardrail support in the project create/edit flows.
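The split of responsibilities above (admins decide what is available, teams decide what applies) amounts to a subset check. A minimal sketch, assuming guardrails are referenced by name; the `TeamGuardrailSettings` class and its fields are hypothetical and do not reflect LiteLLM's database schema or API.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class TeamGuardrailSettings:
    """Hypothetical model of team-selected guardrails (field names are illustrative)."""

    proxy_guardrails: Set[str]                                  # offered by the proxy admin
    project_guardrails: Set[str] = field(default_factory=set)   # offered at project level
    team_selected: List[str] = field(default_factory=list)      # chosen by the team

    def available(self) -> Set[str]:
        # A team may pick from anything offered at the proxy or project level.
        return self.proxy_guardrails | self.project_guardrails

    def effective(self) -> List[str]:
        """Guardrails applied to this team's traffic, in the team's chosen order."""
        unknown = [g for g in self.team_selected if g not in self.available()]
        if unknown:
            raise ValueError(f"guardrails not offered to this team: {unknown}")
        return list(self.team_selected)


settings = TeamGuardrailSettings(
    proxy_guardrails={"pii-mask", "prompt-injection"},
    project_guardrails={"toxicity"},
    team_selected=["toxicity", "pii-mask"],
)
print(settings.effective())  # ['toxicity', 'pii-mask']
```

The sketch rejects unknown names instead of silently dropping them, so a team cannot believe a guardrail is active when the admin never offered it.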

MCP Toolsets

MCP Toolsets let AI platform admins create curated subsets of tools from one or more MCP servers and assign them to teams and keys with scoped permissions. Instead of granting access to an entire MCP server, you can now bundle specific tools into a named toolset — controlling exactly which tools each team or API key can invoke. Toolsets are fully managed through the UI (new Toolsets tab) and API, and work seamlessly with the Responses API and Playground.
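Conceptually, a toolset narrows access from "every tool on a server" to an explicit allow-list that can span several servers. The sketch below models that check with hypothetical names (`Toolset`, `granted_tools`, `can_invoke`, and the example server and tool names); it is not LiteLLM's data model or its toolset API.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable, Set, Tuple

# In this sketch a tool is identified by (mcp_server_name, tool_name).
ToolRef = Tuple[str, str]


@dataclass(frozen=True)
class Toolset:
    name: str
    tools: FrozenSet[ToolRef]


def granted_tools(toolset_names: Iterable[str], registry: Dict[str, Toolset]) -> FrozenSet[ToolRef]:
    """Union of the tools in every toolset assigned to a key or team."""
    granted: Set[ToolRef] = set()
    for name in toolset_names:
        if name not in registry:
            raise KeyError(f"unknown toolset: {name}")
        granted |= registry[name].tools
    return frozenset(granted)


def can_invoke(server: str, tool: str, toolset_names: Iterable[str], registry: Dict[str, Toolset]) -> bool:
    """A call is allowed only if some assigned toolset contains that exact tool."""
    return (server, tool) in granted_tools(toolset_names, registry)


registry = {
    "github-readonly": Toolset("github-readonly", frozenset({("github", "list_issues"), ("github", "get_issue")})),
    "jira-triage": Toolset("jira-triage", frozenset({("jira", "search_issues")})),
}
key_toolsets = ["github-readonly"]  # toolsets assigned to one API key

print(can_invoke("github", "list_issues", key_toolsets, registry))   # True
print(can_invoke("github", "create_issue", key_toolsets, registry))  # False: same server, tool not in toolset
```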

New Models / Updated Models

New Model Support (60 new models)

| Provider | Model | Context Window | Input ($/1M tokens) | Output ($/1M tokens) | Features |
|---|---|---|---|---|---|
| OpenAI | gpt-5.4-mini | 272K | $0.75 | $4.50 | Chat, cache read, flex/batch/priority tiers |
| OpenAI | gpt-5.4-nano | 272K | $0.20 | - | Chat, flex/batch tiers |
| OpenAI | gpt-4-0314 | 8K | $30.00 | $60.00 | Re-added legacy entry (deprecation 2026-03-26) |
| Azure OpenAI | azure/gpt-5.4-mini | 1.05M | $0.75 | $4.50 | Chat completions, cache read |
| Azure OpenAI | azure/gpt-5.4-nano | - | - | - | Chat completions |
| AWS Bedrock | us.amazon.nova-canvas-v1:0 | 2.6K | - | $0.06 / image | Nova Canvas image edit support |
| AWS Bedrock | nvidia.nemotron-super-3-120b | 256K | $0.15 | $0.65 | Function calling, reasoning, system messages |
| AWS Bedrock | minimax.minimax-m2.5 (12 regions) | 1M | $0.30 | $1.20 | Function calling, reasoning, system messages |
| AWS Bedrock | zai.glm-5 | 200K | $1.00 | $3.20 | Function calling, reasoning |
| AWS Bedrock | bedrock/us-gov-{east,west}-1/anthropic.claude-haiku-4-5-20251001-v1:0 | 200K | $1.20 | $6.00 | GovCloud Claude Haiku 4.5 |
| Vertex AI | vertex_ai/claude-haiku-4-5 | 200K | $1.00 | $5.00 | Chat, cache creation/read |
| Gemini | gemini-3.1-flash-live-preview / gemini/gemini-3.1-flash-live-preview | 131K | $0.75 | - | Live audio/video/image/text |
| Gemini | gemini/lyria-3-pro-preview, gemini/lyria-3-clip-preview | 131K | - | - | Music generation preview |
| xAI | xai/grok-4.20-beta-0309-reasoning | 2M | $2.00 | $6.00 | Function calling, reasoning |
| xAI | xai/grok-4.20-beta-0309-non-reasoning | 2M | - | - | Function calling |
| xAI | xai/grok-4.20-multi-agent-beta-0309 | 2M | - | - | Multi-agent preview |
| OCI GenAI | oci/cohere.command-a-reasoning-08-2025, oci/cohere.command-a-vision-07-2025, oci/cohere.command-a-translate-08-2025, oci/cohere.command-r-08-2024, oci/cohere.command-r-plus-08-2024 | 256K | $1.56 | $1.56 | Cohere chat family on OCI |
| OCI GenAI | oci/meta.llama-3.1-70b-instruct, oci/meta.llama-3.2-11b-vision-instruct, oci/meta.llama-3.3-70b-instruct-fp8-dynamic | Varies | Varies | Varies | Llama chat family on OCI |
| OCI GenAI | oci/xai.grok-4-fast, oci/xai.grok-4.1-fast, oci/xai.grok-4.20, oci/xai.grok-4.20-multi-agent, oci/xai.grok-code-fast-1 | 131K | $3.00 | $15.00 | Grok family on OCI |
| OCI GenAI | oci/google.gemini-2.5-pro, oci/google.gemini-2.5-flash, oci/google.gemini-2.5-flash-lite | 1M+ | $1.25 | $10.00 | Gemini family on OCI |
| OCI GenAI | oci/cohere.embed-english-v3.0, oci/cohere.embed-english-light-v3.0, oci/cohere.embed-multilingual-v3.0, oci/cohere.embed-multilingual-light-v3.0, oci/cohere.embed-english-image-v3.0, oci/cohere.embed-english-light-image-v3.0, oci/cohere.embed-multilingual-light-image-v3.0, oci/cohere.embed-v4.0 | Varies | Varies | - | Embeddings on OCI |
| Volcengine | volcengine/doubao-seed-2-0-pro-260215, doubao-seed-2-0-lite-260215, doubao-seed-2-0-mini-260215, doubao-seed-2-0-code-preview-260215 | 256K | - | - | Doubao Seed 2.0 family |
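The table quotes prices per 1M tokens, so a request's cost is each token count multiplied by its rate and divided by 1,000,000. A minimal sketch using two rows of the table (the dictionary and `request_cost` are illustrative, not LiteLLM's cost-tracking code, and they ignore cache-read, batch/flex tiers, and per-image pricing):

```python
# USD per 1M tokens, copied from two rows of the table.
PRICES_PER_1M = {
    "gpt-5.4-mini": {"input": 0.75, "output": 4.50},
    "xai/grok-4.20-beta-0309-reasoning": {"input": 2.00, "output": 6.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at the listed base rates."""
    price = PRICES_PER_1M[model]
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000


print(f"${request_cost('gpt-5.4-mini', 10_000, 2_000):.4f}")  # $0.0165
```

For example, 10,000 input tokens on `gpt-5.4-mini` cost 10,000 × $0.75 / 1M = $0.0075 and 2,000 output tokens cost 2,000 × $4.50 / 1M = $0.009, for $0.0165 in total.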

Features

- AWS Bedrock

  - Add Nova Canvas image edit support - PR #24869, PR #25110

  - Add `nvidia.nemotron-super-3-120b` entries and Bedrock model catalog updates - PR #24588, PR #24645

  - Add MiniMax M2.5 cross-region entries - cost map additions

  - Add `zai.glm-5` pricing entry

  - Improve cache usage exposure for Claude-compatible streaming paths - PR #24850

  - Structured output cost tracking fix for Bedrock JSON mode - PR #23794

  - Preserve JSON-RPC envelope for AgentCore A2A-native agents - PR #25092

  - Fix Bedrock Anthropic file/document handling - PR #25047, PR #25050

  - Fix Bedrock count-tokens with custom endpoint - PR #24199

- Skip `#transform=inline` for base64 data URLs - PR #23818

- DeepInfra

  - Mock DeepInfra completion tests to avoid real API calls - PR #24805

- WatsonX

  - Fix WatsonX tests failing in CI due to missing env vars - PR #24814

- Snowflake

  - Move Snowflake mocked tests to unit test directory - PR #24822

- Anthropic

  - Surface Anthropic tool results in Responses API - PR #23784

  - Auth token and custom `api_base` support - PR #24140

  - Preserve beta header order - PR #23715

  - Cache-control support for Anthropic document/file message blocks - PR #23906, PR #23911

  - Map Anthropic refusal `finish_reason` - PR #23899

  - Cache-control on tool config - PR #24076

  - Remove 200K pricing entries for Opus/Sonnet 4.6 - PR #24689

- Gemini

  - Add `gemini-3.1-flash-live-preview` model - PR #24665

  - Add Lyria 3 Pro / Clip preview entries + docs - PR #24610

  - Normalize Gemini retrieve-file URL - PR #24662

  - Gemini context caching with custom `api_base` - PR #23928

  - Strict `additional_properties` cleanup - PR #24072

  - Gemini context circulation - PR #24073

- xAI

  - Add Grok 4.20 reasoning / non-reasoning / multi-agent preview entries - cost map

- Volcengine

  - Add Doubao Seed 2.0 pro/lite/mini/code-preview entries - cost map

- Mistral

  - Fix Mistral diarize segments response - PR #23925

- OpenRouter

  - Strip prefix on OpenRouter wildcard routing - PR #24603

- Revert problematic cost-per-second change - PR #24297

- GitHub Copilot

  - Short-circuit web search when not supported by Copilot model - PR #24143

- Test conflict resolution and reliability fixes - merges across release window

- Fix missing content-part added event - PR #24445

Bug Fixes

- General

  - Fix `gpt-5.4` pricing metadata - PR #24748

  - Fix gov pricing tests and Bedrock model test follow-ups - PR #24931, PR #24947, PR #25022

  - Fix thinking blocks null handling - PR #24070

  - Streaming tool-call finish reason with empty content - PR #23895

  - Ensure alternating roles in conversion paths - PR #24015

  - File → `input_file` mapping fix - PR #23618

  - File-search emulated alignment - PR #23969

  - Preserve final streaming attributes - PR #23530

  - Streaming metadata hidden params - PR #24220

  - Improve LLM repeated message detection performance - PR #18120

LLM API Endpoints

Features

- Responses API

  - File Search support: Phase 1 native passthrough and Phase 2 emulated fallback for non-OpenAI models - PR #23969

  - Prompt management support for Responses API - PR #23999

  - Encrypted-content affinity across model versions - PR #23854, PR #24110

  - Round-trip Responses API `reasoning_items` in chat completions - PR #24690

  - Emit `content_part.added` streaming event for non-OpenAI models - PR #24445

  - Surface Anthropic code execution results as `code_interpreter_call` - PR #23784

  - Preserve Anthropic `thinking.summary` when routing to OpenAI Responses API - PR #21441

  - Auto-route Azure `gpt-5.4+` tools + reasoning requests to Responses API - PR #23926

  - Preserve annotations in Azure AI Foundry Agents responses - PR #23939

  - API reference path routing updates - PR #24155

  - Map Chat Completion `file` type to Responses API `input_file` - PR #23618

  - Map `file_url` → `file_id` in Responses→Completions translation - PR #24874

- Vertex AI batch cancel support - PR #23957

- Token Counting

- Mistral: preserve diarization segments in transcription response - PR #23925

- Gemini: convert `task_type` to camelCase `taskType` for Gemini API - PR #24191

- New reusable video character endpoints (create / edit / extension / get) with router-first routing - PR #23737

- Support self-hosted Firecrawl response format - PR #24866

- Preserve JSON-RPC envelope for AgentCore A2A-native agents - PR #25092

- Support `ANTHROPIC_AUTH_TOKEN`/`ANTHROPIC_BASE_URL` env vars and custom `api_base` in experimental passthrough - PR #24140
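The Gemini `task_type` fix in this list concerns a naming mismatch: OpenAI-style callers pass snake_case parameters such as `task_type`, while the Gemini REST API documents its request fields in camelCase (`taskType`). A minimal, hypothetical sketch of that kind of key translation (not LiteLLM's code; `snake_to_camel` and `camelize_keys` are made-up helper names):

```python
from typing import Any, Dict


def snake_to_camel(name: str) -> str:
    """'task_type' -> 'taskType'; names without underscores are returned unchanged."""
    head, *rest = name.split("_")
    return head + "".join(part[:1].upper() + part[1:] for part in rest)


def camelize_keys(params: Dict[str, Any]) -> Dict[str, Any]:
    """Copy of `params` with top-level keys converted to camelCase (values untouched)."""
    return {snake_to_camel(key): value for key, value in params.items()}


print(camelize_keys({"task_type": "RETRIEVAL_DOCUMENT", "title": "Release notes"}))
# {'taskType': 'RETRIEVAL_DOCUMENT', 'title': 'Release notes'}
```

Only keys are converted; enum-like values such as `RETRIEVAL_DOCUMENT` must pass through unchanged.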

Bugs

- General

Management Endpoints / UI

Features

- Virtual Keys

  - Substring search for `user_id` and `key_alias` on `/key/list` - PR #24746, PR #24751

  - Wire `team_id` filter to key alias dropdown - PR #25114, PR #25119

  - Allow hashed `token_id` in `/key/update` - PR #24969

  - Enforce upper-bound key params on `/key/update` and bulk update hook paths - PR #25103, PR #25110

  - Fix create-key tags dropdown - PR #24273

  - Fix key-update 404 - PR #24063

  - Fix key admin privilege escalation - PR #23781

  - Key-endpoint authentication hardening - PR #23977

  - Disable custom API keys flag - PR #23812

  - Skip alias revalidation on key update - PR #23798

  - Fix invalid keys for internal users - PR #23795

  - Distributed lock for scheduled key rotation job execution - PR #23364, PR #23834, PR #25150

- Teams + Organizations

  - Resolve access-group models / MCP servers / agents in team endpoints and UI - PR #25027, PR #25119

  - Allow changing team organization from team settings - PR #25095

  - Per-model rate limits in team edit/info views - PR #25144, PR #25156

  - Fix team model update 500 due to unsupported Prisma JSON path filter - PR #25152

  - Team model-group name routing fix - PR #24688

  - Modernize teams table - PR #24189

  - Team-member budget duration on create - PR #23484

  - Add missing `team_member_budget_duration` param to `new_team` docstring - PR #24243

  - Fix teams table refresh, infinite dropdown, and leftnav migration - PR #24342

- Usage + Analytics

- Models + Providers

- Guardrails UI

- MCP Toolsets UI

  - New Toolsets tab for curated MCP tool subsets with scoped permissions - PR #25155

- Auth / SSO

- UI Cleanup / Migration

  - Migrate Tremor Text/Badge to antd Tag and native spans - PR #24750

  - Migrate default user settings to antd - PR #23787

  - Migrate route preview Tremor → antd - PR #24485

  - Migrate antd message to context API - PR #24192

  - Extract `useChatHistory` hook - PR #24172

  - Left-nav external icon - PR #24069

  - Vitest coverage for UI - PR #24144

Bugs

- Fix logs page showing unfiltered results when backend filter returns zero rows - PR #24745

- Fix UI logs filter - PR #23792

- Fix edit budget flow - PR #24711

- Fix bulk update - PR #24708

- Fix user cache invalidation - PR #24717

- Fix guardrail mode type crash - PR #24035

- Sanitize proxy inputs - PR #24624

AI Integrations

Logging

- General

  - Centralize logging kwarg updates via a single update function - PR #23659

  - Fix failure callbacks silently skipped when `customLogger` is not initialized - PR #24826

  - Eliminate race condition in streaming `guardrail_information` logging - PR #24592

  - Use actual `start_time` in failed request spend logs - PR #24906

  - Harden credential redaction and stop logging raw sensitive auth values - PR #25151, PR #24305

  - Filter metadata by `user_id` - PR #24661

  - Batch metrics improvements - PR #24691

  - Filter metadata hidden params in streaming - PR #24220

  - Shared aiohttp session auto-recovery - PR #23808

  - Deferred guardrail logging v2 - PR #24135
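Credential redaction generally means masking secrets before a payload reaches any logging callback. A minimal sketch of the idea, assuming secrets appear either under well-known field names or as bearer tokens inside strings; `SENSITIVE_KEYS`, `BEARER_TOKEN`, and `redact` are hypothetical and are not LiteLLM's redaction rules.

```python
import re
from typing import Any

# Hypothetical set of field names treated as secrets (matched case-insensitively).
SENSITIVE_KEYS = {"api_key", "authorization", "x-api-key", "password", "client_secret"}
# Catches bearer tokens embedded in free-form strings such as error messages.
BEARER_TOKEN = re.compile(r"(?i)\bbearer\s+\S+")


def redact(value: Any) -> Any:
    """Return a copy of `value` that is safe to hand to a logging callback."""
    if isinstance(value, dict):
        return {
            key: "[REDACTED]" if str(key).lower() in SENSITIVE_KEYS else redact(item)
            for key, item in value.items()
        }
    if isinstance(value, list):
        return [redact(item) for item in value]
    if isinstance(value, tuple):
        return tuple(redact(item) for item in value)
    if isinstance(value, str):
        return BEARER_TOKEN.sub("Bearer [REDACTED]", value)
    return value


payload = {
    "model": "gpt-5.4-mini",
    "api_key": "sk-live-123",
    "headers": {"Authorization": "Bearer abc.def"},
    "error": "401 from upstream for bearer abc.def",
}
print(redact(payload))
```

The function builds a new structure rather than mutating the request, so redaction for logs cannot accidentally strip credentials the upstream call still needs.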

Guardrails

- Register DynamoAI guardrail initializer and enum entry - PR #23752

- Extract helper methods in guardrail handlers to fix PLR0915 - PR #24802

- Add optional `on_error` fallback for guardrail pipeline failures - PR #24831, PR #25150

- Allow teams to attach/manage their own guardrails from team settings - PR #25038

- Project-level guardrail config in create/edit flows - PR #25100

- Return HTTP 400 (vs 500) for Model Armor streaming blocks - PR #24693

- Deferred guardrail logging v2 - PR #24135

- Eliminate race condition in streaming `guardrail_information` logging - PR #24592

- Model-level guardrails on non-streaming post-call - PR #23774

- Guardrail post-call logging fix - PR #23910

- Missing guardrails docs - PR #24083

Prompt Management

- Environment + user tracking for prompts (`development`/`staging`/`production`) in CRUD + UI flows - PR #24855, PR #25110

- Prompt-to-responses integration - PR #23999

Secret Managers

- No new secret manager provider additions in this release.

Spend Tracking, Budgets and Rate Limiting

- Enforce budget for models not directly present in the cost map - PR #24949

- Per-model rate limits in team settings/info UI - PR #25144, PR #25156

- Prometheus organization budget metrics - PR #24449

- Prometheus spend metadata - PR #24434

- Fix unversioned Vertex Claude Haiku pricing entry to avoid `$0.00` accounting - PR #25151

- Fix budget/spend counters - PR #24682

- Project ID tracking in spend logs - PR #24432

- Dynamic rate-limit pre-ratelimit background refresh - PR #24106

- Point72 limits changes - PR #24088

- Model-level affinity in router - PR #24110
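A per-model limit gives each model its own counter instead of one team-wide budget. The fixed-window limiter below is a generic, hypothetical sketch: `PerModelRateLimiter` is not a LiteLLM class, and this single-process version does not share state between proxy instances.

```python
import time
from typing import Callable, Dict, Tuple


class PerModelRateLimiter:
    """Fixed-window requests-per-minute limits, tracked separately per (team, model)."""

    def __init__(self, rpm_limits: Dict[str, int], clock: Callable[[], float] = time.monotonic):
        self.rpm_limits = rpm_limits  # model name -> max requests per 60-second window
        self.clock = clock
        self._windows: Dict[Tuple[str, str], Tuple[int, int]] = {}  # key -> (window index, count)

    def allow(self, team_id: str, model: str) -> bool:
        limit = self.rpm_limits.get(model)
        if limit is None:
            return True  # no per-model limit configured for this model
        window = int(self.clock() // 60)
        start, count = self._windows.get((team_id, model), (window, 0))
        if start != window:
            count = 0  # a new minute has started: reset the counter
        if count >= limit:
            return False
        self._windows[(team_id, model)] = (window, count + 1)
        return True


now = [0.0]  # fake clock so the example is deterministic
limiter = PerModelRateLimiter({"gpt-5.4-mini": 2}, clock=lambda: now[0])
print([limiter.allow("team-a", "gpt-5.4-mini") for _ in range(3)])  # [True, True, False]
```

A fixed window is the simplest choice; sliding windows or token buckets smooth out bursts at window boundaries at the cost of more bookkeeping.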

MCP Gateway

- Introduce MCP Toolsets with DB types, CRUD APIs, scoped permissions, and UI management tab - PR #25155

- Resolve toolset names and enforce toolset access correctly in Responses API and streamable MCP paths - PR #25155

- Switch toolset permission caching to shared cache path and improve cache invalidation behavior - PR #25155

- Allow JWT auth for `/v1/mcp/server/*` sub-paths - PR #24698, PR #25113

- Add STS AssumeRole support for MCP SigV4 auth - PR #25151

- Tag query fix + MCP metadata support cherry-pick - PR #25145

- MCP REST M2M OAuth2 flow - PR #23468

- Upgrade MCP SDK to 1.26.0 - PR #24179

- Restore MCP server fields dropped by schema sync migration - PR #24078

Performance / Loadbalancing / Reliability improvements

- Add control plane for multi-proxy worker management - PR #24217

- Make DB migration failure exit opt-in via `--enforce_prisma_migration_check` - PR #23675

- Return the picked model (not a comma-separated list) when batch completions are used - PR #24753

- Fix mypy type errors in Responses transformation, spend tracking, and PagerDuty - PR #24803

- Fix router code coverage CI failure for health check filter tests - PR #24812

- Integrate router health-check failures with cooldown behavior and transient 429/408 handling - PR #24988, PR #25150

- Add distributed lock for key rotation job execution - PR #23364, PR #23834, PR #25150

- Improve team routing reliability with deterministic grouping, isolation fixes, stale alias controls, and order-based fallback - PR #25148, PR #25154

- Regenerate GCP IAM token per async Redis cluster connection (fix token TTL failures) - PR #24426, PR #25155

- Proxy server reliability hardening with bounded queue usage - PR #25155

- Auto schema sync on startup - PR #24705

- Kill orphaned Prisma engine on reconnect - PR #24149

- Use dynamic DB URL - PR #24827

- Migration corrections - PR #24105
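When several proxy workers run the same scheduled job (such as key rotation), a distributed lock lets exactly one of them execute each run. The common pattern is "set this key only if it does not exist, with an expiry", which Redis provides as `SET key value NX PX <ms>`. The sketch below simulates that with an in-memory store; it is not LiteLLM's implementation, and the `key_rotation_lock` key name and 300-second TTL are made-up values.

```python
import time
import uuid
from typing import Callable, Dict, Tuple


class InMemoryLockStore:
    """Single-process stand-in for a shared store such as Redis (SET ... NX PX)."""

    def __init__(self, clock: Callable[[], float] = time.monotonic):
        self.clock = clock
        self._data: Dict[str, Tuple[str, float]] = {}  # key -> (holder token, expiry time)

    def set_if_absent(self, key: str, value: str, ttl_s: float) -> bool:
        entry = self._data.get(key)
        if entry is not None and entry[1] > self.clock():
            return False  # another holder's lock has not expired yet
        self._data[key] = (value, self.clock() + ttl_s)
        return True

    def delete_if_value(self, key: str, value: str) -> bool:
        # Release only our own lock, never one re-acquired by another worker after expiry.
        entry = self._data.get(key)
        if entry is not None and entry[0] == value:
            del self._data[key]
            return True
        return False


def run_once_across_workers(store: InMemoryLockStore, job: Callable[[], None],
                            key: str = "key_rotation_lock", ttl_s: float = 300.0) -> bool:
    """Run `job` only if this worker wins the lock; return whether it ran."""
    token = uuid.uuid4().hex  # identifies this holder
    if not store.set_if_absent(key, token, ttl_s):
        return False
    try:
        job()
    finally:
        store.delete_if_value(key, token)
    return True
```

The TTL ensures a crashed worker cannot hold the lock forever. Against real Redis, the compare-and-delete in `delete_if_value` has to be atomic (typically a small Lua script); this in-memory version only illustrates the control flow.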

Documentation Updates

- MCP zero trust auth guide - PR #23918

- Week 1 onboarding checklist - PR #25083

- Remove `NLP_CLOUD_API_KEY` requirement from `test_exceptions` - PR #24756

- Update `gemini-2.0-flash` to `gemini-2.5-flash` in `test_gemini` - PR #24817

- HA control-plane diagram clarity + mobile rendering updates - PR #24747

- Document `default_team_params` in config reference and examples - PR #25032

- JWT to Virtual Key mapping guide - PR #24882

- MCP Toolsets docs and sidebar updates - PR #25155

- Security docs updates and April hardening blog - PR #24867, PR #24868, PR #24871, PR #25102

- Security incident blog - PR #24537

- Security townhall blog - PR #24692

- WebRTC blog - PR #23547

- Vanta announcement - PR #24800

- Prompt caching Gemini support docs - PR #24222

- OpenCode / reasoningSummary docs - PR #24468

- Thinking summary docs - PR #22823

- v0 docs contributions - PR #24023

- Blog posts RSS update - PR #23791

- General docs cleanup + townhall announcements - PR #24839, PR #25021, PR #25026

Infrastructure / Security Notes

- Optimize CI pipeline - PR #23721

- Add zizmor to CI/CD - PR #24663

- Remove `.claude/settings.json` and block re-adding via semgrep - PR #24584

- Harden npm and Docker supply chain workflows and release pipeline checks - PR #24838, PR #24877, PR #24881, PR #24905, PR #24951, PR #25023, PR #25034, PR #25036, PR #25037, PR #25136, PR #25158

- Resolve CodeQL/security workflow issues and fix broken action SHA references - PR #24815, PR #24880, PR #24697

- Pin axios and tool versions - PR #24829, PR #24594, PR #24607, PR #24525, PR #24696

- Re-add Codecov reporting in GHA matrix workflows - PR #24804, PR #24815

- Fix(docker): load enterprise hooks in non-root runtime image - PR #24917, PR #25037

- OSSF scorecard workflow - PR #24792

- Skip scheduled workflows on forks - PR #24460

- CI/CD improvements - PR #24839, PR #24837, PR #24740, PR #24741, PR #24742, PR #24754

- Remove neon CLI dependency - PR #24951

- Workflow deletions - PR #24541

- Publish to PyPI migration - PR #24654

- Poetry lock / content-hash checks - PR #24082, PR #24159

- Apply Black formatting to 14 files - PR #24532, PR #24092, PR #24153, PR #24167, PR #24173, PR #24187

- Fix lint issues - PR #24932

- Version bump to 1.83.0 - PR #24840

- Test cleanup and reliability fixes - PR #24755, PR #24820, PR #24824, PR #24258

- License key environment handling - PR #24168

- Remove phone numbers from repo - PR #24587

New Contributors

- @voidborne-d made their first contribution in https://github.com/BerriAI/litellm/pull/23808

- @vanhtuan0409 made their first contribution in https://github.com/BerriAI/litellm/pull/24078

- @devin-petersohn made their first contribution in https://github.com/BerriAI/litellm/pull/24140

- @benlangfeld made their first contribution in https://github.com/BerriAI/litellm/pull/24413

- @J-Byron made their first contribution in https://github.com/BerriAI/litellm/pull/24449

- @jaydns made their first contribution in https://github.com/BerriAI/litellm/pull/24823

- @stuxf made their first contribution in https://github.com/BerriAI/litellm/pull/24838

- @clfhhc made their first contribution in https://github.com/BerriAI/litellm/pull/24932

Full Changelog: https://github.com/BerriAI/litellm/compare/v1.82.3-stable...v1.83.3-stable

04/04/2026

- New Models / Updated Models: 59

- LLM API Endpoints: 28

- Management Endpoints / UI: 61

- Logging / Guardrail / Prompt Management Integrations: 30

- Spend Tracking, Budgets and Rate Limiting: 11

- MCP Gateway: 8

- Performance / Loadbalancing / Reliability improvements: 17

- Documentation Updates: 24

- Infrastructure / Security: 50